Nut/OS Events

This paper provides an overview of Nut/OS event handling internals.

Basically, Nut/OS thread scheduling can be understood as handling events by moving threads among queues. A thread waiting for an event is blocked by moving it from the global ready-to-run queue to a queue that is expected to receive this event.

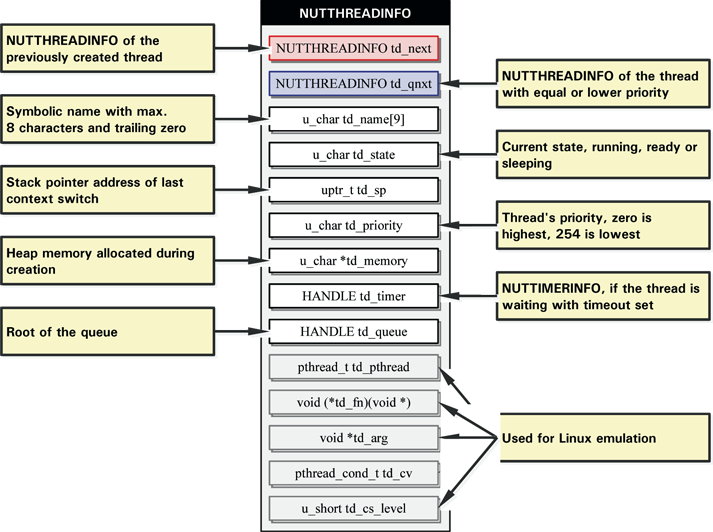

Linked Lists of Threads

During system initialization, the idle thread is created first, which in turn creates the main application thread. Depending on the Nut/OS components used by the application, the system may create additional threads like the DHCP client or the Ethernet receiver thread. Applications can create more threads by calling

HANDLE NutThreadCreate(u_char * name, void (*fn) (void *), void *arg, size_t stackSize);

When successful, NutThreadCreate returns a handle, which is in fact a pointer to the NUTTHREADINFO structure of the new thread. Each time Nut/OS creates a new thread, a NUTTHREADINFO structure is allocated from heap memory and added to the front of a linked list containing all existing threads. The global pointer nutThreadList points to the first entry.
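The following is a minimal sketch of this "allocate and add in front" step, not the actual Nut/OS source. The type sketch_thread, the function sketch_thread_create and the variable thread_list are hypothetical stand-ins for NUTTHREADINFO, the list handling inside NutThreadCreate and nutThreadList. Only the member name td_next is taken from the text; td_name is added here just to tell entries apart.

```c
#include <assert.h>
#include <stdlib.h>

/* Simplified stand-in for NUTTHREADINFO (illustrative only). */
typedef struct sketch_thread {
    struct sketch_thread *td_next; /* link in the list of all threads */
    const char *td_name;           /* hypothetical member for this sketch */
} sketch_thread;

/* Plays the role of the global nutThreadList pointer. */
static sketch_thread *thread_list = NULL;

/* Allocate a new entry from the heap and put it in front of the list.
 * Returns NULL if the allocation fails. */
static sketch_thread *sketch_thread_create(const char *name)
{
    sketch_thread *td;

    assert(name != NULL);
    td = malloc(sizeof *td);
    if (td == NULL) {
        return NULL;
    }
    td->td_name = name;
    td->td_next = thread_list; /* old head becomes the second entry */
    thread_list = td;          /* new thread is now the first entry */
    return td;
}
```

Because every new entry is put in front, the thread created first (the idle thread) always ends up as the last entry of the list.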

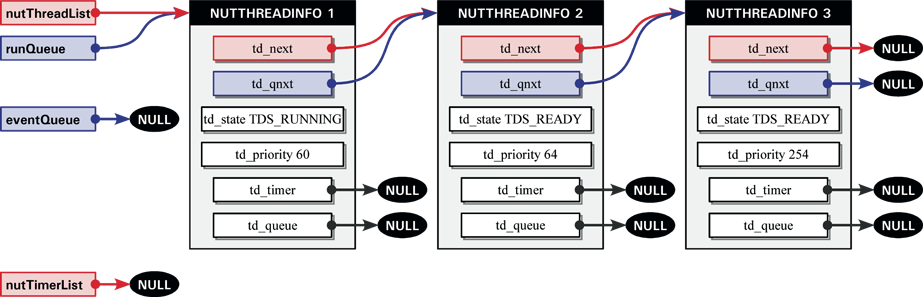

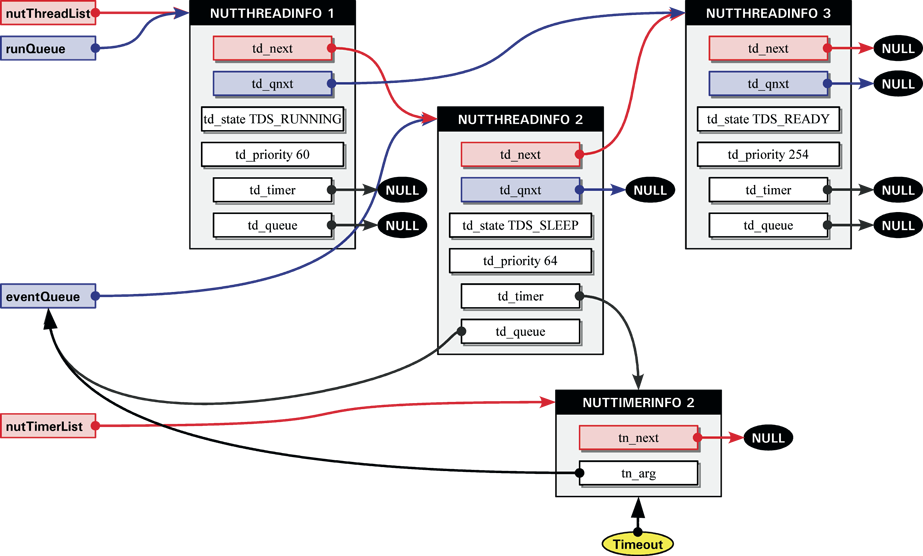

To keep things simple, the following diagrams show only the relevant structure members. Furthermore, let's assume that only three threads have been created: the idle thread, the main application thread and an additional thread created by the application. This results in the following list of threads.

The pointer nutThreadList points to a list that contains all three threads. This list is linked by the structure element td_next (red links). The last NUTTHREADINFO structure is always the one of the idle thread.

Note that there are more lists. The global pointer runQueue points to the list of all threads that are ready to run, linked by td_qnxt (blue links). In contrast to nutThreadList, new entries are not simply added to the front. This list is always sorted by the value of td_priority. In Nut/OS, low values mean high priority. The idle thread runs at the lowest priority, 254. Again, to keep the following diagrams simple, the priority order is the same as the order in the list of all threads. In reality this is usually not the case.
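To illustrate how a queue can be kept sorted by td_priority, here is a small sketch of a priority-ordered insert. It is not the Nut/OS implementation: sketch_thread and sketch_add_pri_queue are hypothetical names, and the placement of a thread behind entries of equal priority is an assumption made for this example.

```c
#include <assert.h>
#include <stddef.h>

/* Simplified stand-in for NUTTHREADINFO (illustrative only). */
typedef struct sketch_thread {
    struct sketch_thread *td_qnxt; /* link in the queue */
    unsigned char td_priority;     /* low value means high priority */
} sketch_thread;

/* Insert td into *queue, keeping the queue sorted by ascending
 * td_priority. Assumption: a thread is placed behind entries of
 * equal priority. */
static void sketch_add_pri_queue(sketch_thread *td, sketch_thread **queue)
{
    sketch_thread **link = queue;

    assert(td != NULL && queue != NULL);
    /* Skip all entries with higher or equal priority. */
    while (*link != NULL && (*link)->td_priority <= td->td_priority) {
        link = &(*link)->td_qnxt;
    }
    td->td_qnxt = *link;
    *link = td;
}
```

With this ordering the idle thread, running at priority 254, stays at the end of the queue regardless of the order in which threads are inserted.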

Waiting for an Event

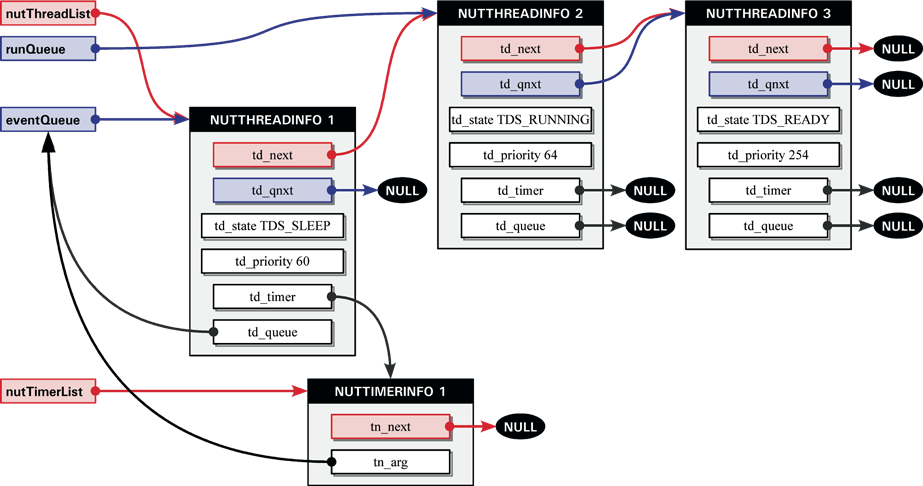

The runQueue does not always contain all existing threads, but only those which are ready to run. One of its entries must have the state TDS_RUNNING and all remaining entries in this queue are in state TDS_READY. Threads with state TDS_SLEEP will never be part of the runQueue.

If a thread directly or indirectly calls

int NutEventWait(HANDLE * qhp, u_long ms);

its NUTTHREADINFO structure will be removed from this list of ready-to-run threads.

The first parameter of NutEventWait is a pointer to a pointer to a linked list (HANDLE is defined as a void pointer). This parameter is used in a similar way as runQueue, but instead of listing all ready-to-run threads, it lists the threads waiting for a specific event. In our example the thread with the highest priority called

HANDLE eventQueue = 0; NutEventWait(&eventQueue, 1000);

and has therefore been removed from the runQueue and added to eventQueue.

Internally, NutEventWait executes

NutThreadRemoveQueue(runningThread, &runQueue);
runningThread->td_state = TDS_SLEEP;
NutThreadAddPriQueue(runningThread, (NUTTHREADINFO **) qhp);

to remove the running thread from the runQueue, change its state from TDS_RUNNING to TDS_SLEEP and add it to the eventQueue.
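The three steps above can be modelled on plain linked lists. The sketch below is an illustration under stated assumptions, not Nut/OS code: all sketch_ and SK_ names are hypothetical, the queues are passed as parameters instead of using globals, and the timer and the context switch are left out.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical counterparts of TDS_READY, TDS_RUNNING and TDS_SLEEP. */
enum sketch_state { SK_READY, SK_RUNNING, SK_SLEEP };

typedef struct sketch_thread {
    struct sketch_thread *td_qnxt;
    unsigned char td_priority; /* low value means high priority */
    enum sketch_state td_state;
} sketch_thread;

/* Unlink td from *queue; does nothing if td is not in the queue. */
static void sketch_remove_queue(sketch_thread *td, sketch_thread **queue)
{
    sketch_thread **link = queue;

    while (*link != NULL) {
        if (*link == td) {
            *link = td->td_qnxt;
            td->td_qnxt = NULL;
            return;
        }
        link = &(*link)->td_qnxt;
    }
}

/* Insert td into *queue, sorted by ascending td_priority. */
static void sketch_add_pri_queue(sketch_thread *td, sketch_thread **queue)
{
    sketch_thread **link = queue;

    while (*link != NULL && (*link)->td_priority <= td->td_priority) {
        link = &(*link)->td_qnxt;
    }
    td->td_qnxt = *link;
    *link = td;
}

/* Model of the queue handling when a running thread starts waiting. */
static void sketch_wait(sketch_thread *running, sketch_thread **run_queue,
                        sketch_thread **event_queue)
{
    assert(running->td_state == SK_RUNNING);
    sketch_remove_queue(running, run_queue);
    running->td_state = SK_SLEEP;
    sketch_add_pri_queue(running, event_queue);
}
```

After sketch_wait returns, the thread is reachable only through the event queue, which is why a sleeping thread can never be selected from the run queue.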

The second parameter of NutEventWait specifies the maximum time the thread is willing to wait for an event posted to the queue. If this parameter is zero, the thread will wait without time limit. Otherwise it is interpreted as the number of milliseconds to wait, and Nut/OS will create a timer in this case.

Like threads, timers are created by allocating a NUTTIMERINFO from heap memory and adding it to a linked list. The global pointer nutTimerList points to the first entry, and the following entries are linked by the pointer tn_next, which is a member of NUTTIMERINFO.

If a timeout is specified, NutEventWait calls

HANDLE NutTimerStart(u_long ms, void (*callback) (HANDLE, void *), void *arg, u_char flags);

which creates a NUTTIMERINFO structure and adds it to nutTimerList.

We will discuss the timeout case in more detail later.

Finally, NutEventWait calls

NutThreadSwitch();

which changes the state of the first thread in the runQueue from TDS_READY to TDS_RUNNING and loads the CPU registers from the stack of this thread.

In this document I will not describe in detail how Nut/OS switches from one thread to another. In fact, NutEventWait will not return immediately, because the CPU starts executing the second thread.

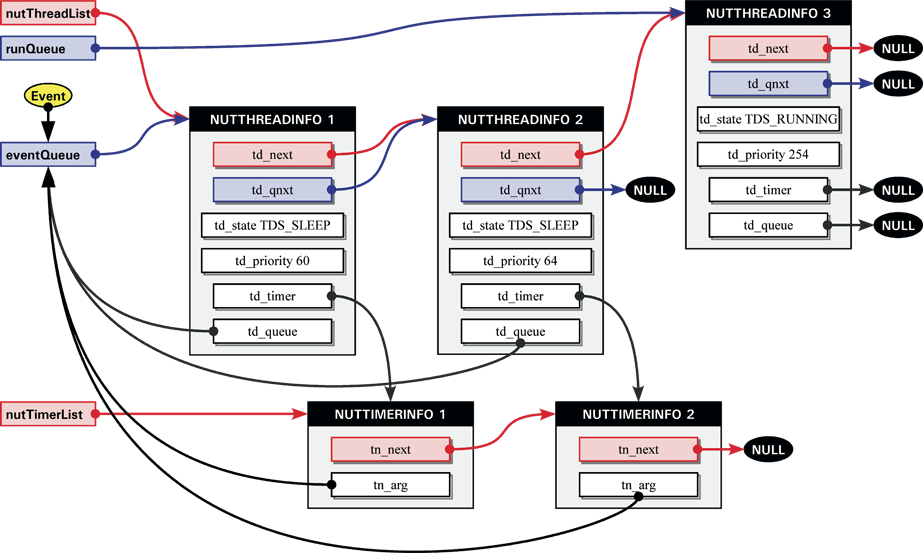

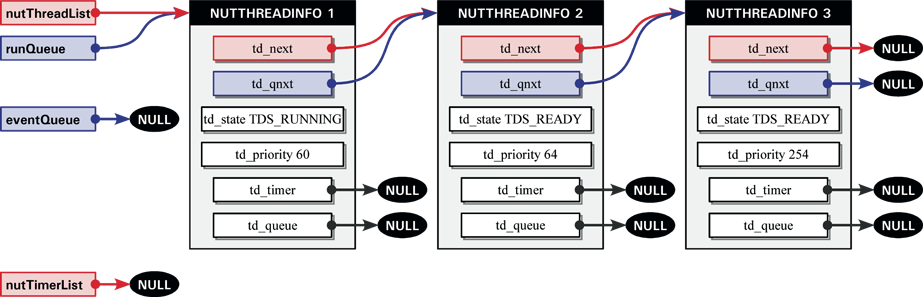

Let's assume that our second thread directly or indirectly calls NutEventWait too, on the same eventQueue.

NutEventWait(&eventQueue, 1000);

Again, Nut/OS moves the NUTTHREADINFO structure from the runQueue to this eventQueue, does the required updates of td_state, creates another timer and finally passes control to the last thread.

As described above, the last thread is the idle thread, which will never be removed from the runQueue. It serves as a placeholder during times when all worker threads are sleeping. It keeps the CPU busy by calling NutThreadYield in an endless loop. As soon as another thread becomes ready to run, the idle thread will lose CPU control. In our case, this may happen as soon as an event is posted to the eventQueue.

Posting an Event

In order to wake up a thread waiting in an event queue, a thread calls

int NutEventPost(HANDLE volatile *qhp);

This moves the NUTTHREADINFO structure at the front of this priority-ordered linked list to the runQueue. As we already know, the runQueue is also ordered by priority. If the thread that called NutEventPost has a lower priority than the woken-up thread, CPU control is passed to the latter.
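The queue handling of posting an event can be sketched as follows. This is only a model of the list operations described above: the sketch_ and SK_ names are hypothetical, the context switch is not modelled, and returning 0 when nobody is waiting is an assumption of this example rather than a statement about the NutEventPost return value.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical counterparts of TDS_READY, TDS_RUNNING and TDS_SLEEP. */
enum sketch_state { SK_READY, SK_RUNNING, SK_SLEEP };

typedef struct sketch_thread {
    struct sketch_thread *td_qnxt;
    unsigned char td_priority; /* low value means high priority */
    enum sketch_state td_state;
} sketch_thread;

/* Insert td into *queue, sorted by ascending td_priority. */
static void sketch_add_pri_queue(sketch_thread *td, sketch_thread **queue)
{
    sketch_thread **link = queue;

    while (*link != NULL && (*link)->td_priority <= td->td_priority) {
        link = &(*link)->td_qnxt;
    }
    td->td_qnxt = *link;
    *link = td;
}

/* Move the first (highest priority) waiting thread to the run queue.
 * Returns 1 if a thread was woken up, 0 if the event queue was empty. */
static int sketch_post(sketch_thread **event_queue, sketch_thread **run_queue)
{
    sketch_thread *td;

    assert(event_queue != NULL && run_queue != NULL);
    td = *event_queue;
    if (td == NULL) {
        return 0;
    }
    *event_queue = td->td_qnxt; /* unlink the head of the event queue */
    td->td_state = SK_READY;
    sketch_add_pri_queue(td, run_queue);
    return 1;
}
```

Since the event queue is itself ordered by priority, taking its head always wakes the highest-priority waiter, and the sorted insert puts that thread in front of any lower-priority entries in the run queue.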

Alternatively a thread may call

int NutEventBroadcast(HANDLE * qhp);

which moves all threads waiting in the event queue to the runQueue.

In our given example, only the idle thread is left.

If the idle thread is doing nothing except calling NutThreadYield in a loop, who is posting an event while both worker threads are sleeping? Well, there are two special calls, which can be used from within interrupt routines.

int NutEventPostAsync(HANDLE volatile *qhp);
int NutEventBroadcastAsync(HANDLE * qhp);

The difference between NutEventPost and NutEventPostAsync is that with the latter, Nut/OS will move the NUTTHREADINFO structures and update the td_state values, but will not switch CPU control. The switch is actually done when the idle thread calls NutThreadYield. Nut/OS provides cooperative multithreading, which means that a thread can rely on not losing CPU control unless it calls a system function that may change its state. However, interrupts are preemptive in any case. By delaying the context switch, Nut/OS ensures that cooperative multithreading is maintained even when interrupts are able to wake up sleeping threads.

For now, let's assume that in our example some kind of smart interrupt routine posts an event to eventQueue by calling

NutEventPostAsync(&eventQueue);

This moves the first waiting thread back to the runQueue.

If an event is posted to the eventQueue before the timer elapses, then the NUTTIMERINFO will be removed from the nutTimerList by a call to

NutTimerStopAsync(td->td_timer);

Event Timeout

In detail, NutEventWait calls

td_timer = NutTimerStart(ms, NutEventTimeout, (void *) qhp, TM_ONESHOT);

The flag TM_ONESHOT ensures that the timer is removed from the nutTimerList after it has elapsed.

Obviously the most interesting parameter is the callback routine

void NutEventTimeout(HANDLE timer, void *arg);

It is called with the timer handle (a pointer to the NUTTIMERINFO) and the argument passed to NutTimerStart. In our example, the timer handle is the same one that had previously been stored in td_timer, and arg points to eventQueue.

NutEventTimeout will walk through this queue, searching for the NUTTHREADINFO structure that contains a td_timer with the same timer handle. If it is not found, the routine doesn't care. It simply means that an event has already removed the thread from the queue. Nothing else needs to be done, because the Nut/OS timer handling will automatically remove oneshot timers. If it is found, td_timer will be cleared and the NUTTHREADINFO structure will be moved to the runQueue.
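The search performed by the timeout callback can be sketched like this. It is a simplified model, not the Nut/OS routine: the sketch_ and SK_ names are hypothetical, the timer handle is an opaque pointer, and the run queue is passed as a parameter, whereas the real callback only receives the timer handle and arg.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical counterparts of TDS_READY, TDS_RUNNING and TDS_SLEEP. */
enum sketch_state { SK_READY, SK_RUNNING, SK_SLEEP };

typedef struct sketch_thread {
    struct sketch_thread *td_qnxt;
    unsigned char td_priority; /* low value means high priority */
    enum sketch_state td_state;
    void *td_timer;            /* handle of the timeout timer, or NULL */
} sketch_thread;

/* Insert td into *queue, sorted by ascending td_priority. */
static void sketch_add_pri_queue(sketch_thread *td, sketch_thread **queue)
{
    sketch_thread **link = queue;

    while (*link != NULL && (*link)->td_priority <= td->td_priority) {
        link = &(*link)->td_qnxt;
    }
    td->td_qnxt = *link;
    *link = td;
}

/* Look for the thread that owns the elapsed timer. If found, clear its
 * td_timer and move it to the run queue. If not found, an event has
 * already woken the thread and there is nothing to do. */
static void sketch_event_timeout(void *timer, sketch_thread **event_queue,
                                 sketch_thread **run_queue)
{
    sketch_thread **link = event_queue;

    assert(event_queue != NULL && run_queue != NULL);
    while (*link != NULL) {
        sketch_thread *td = *link;

        if (td->td_timer == timer) {
            *link = td->td_qnxt; /* unlink from the event queue */
            td->td_timer = NULL; /* marks the wake-up as a timeout */
            td->td_state = SK_READY;
            sketch_add_pri_queue(td, run_queue);
            return;
        }
        link = &td->td_qnxt;
    }
}
```

Clearing td_timer is what later allows the woken thread to tell a timeout apart from a posted event.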

Remember that NutEventTimeout is running in interrupt context, so it will not perform any thread switching. As soon as the running thread calls a function that may switch threads, a thread switch may occur. In our example, this will not happen, because the currently running thread has a higher priority.

Later on, CPU control will (hopefully) be passed to the second thread, which then continues to execute NutEventWait. This routine will check whether it had been called with a timeout value and td_timer had been cleared to zero. In this case it returns -1 to inform the caller that a timeout occurred. Otherwise zero will be returned.

Problem Discussion

At the time of this writing, Nut/OS is at version 3.9.2.

Problem 1: Not yet verified, but it looks like events are sometimes lost when a timeout value has been specified. With most applications this is no real problem, because the timeout will prevent the thread from blocking completely.

Problem 2: Several variables and parameters are marked volatile. However, cooperative multithreading requires the volatile attribute only for variables that are modified in interrupt routines.

Harald Kipp

Herne, October 9th, 2004.